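Last year, with my company's support, I completed an AI course at Yonsei University that met every Friday for seven weeks.

In this post I want to summarize 'Chain of Thought (CoT)', the project topic I found most interesting during the course.

I was the presenter for the project, so I will share the key points of what I prepared while trying to explain a complex concept as simply as possible.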

🧘 What is Chain-of-Thought (CoT)?

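It is a prompting method that helps an LLM reason through a problem step by step instead of only producing a final answer.

In the figure below, prompting the LLM to write out its solution steps lets it correctly answer a question it previously got wrong, which shows the performance gain.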
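To make the idea concrete, here is a minimal sketch of how a standard prompt and a CoT prompt differ. The exemplar text is the tennis-ball example from the CoT paper linked in this section; the helper name `build_prompt` is my own, hypothetical choice, and no model is actually called here.

```python
# One worked exemplar, following the tennis-ball example from the CoT paper.
EXEMPLAR_QUESTION = (
    "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?"
)
# Standard prompting: the exemplar shows only the final answer.
STANDARD_ANSWER = "The answer is 11."
# CoT prompting: the exemplar also shows the intermediate reasoning steps.
COT_ANSWER = (
    "Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. "
    "5 + 6 = 11. The answer is 11."
)


def build_prompt(question: str, use_cot: bool) -> str:
    """Build a one-shot prompt (hypothetical helper, not from the paper).

    The only difference between the two modes is whether the exemplar
    answer spells out its reasoning before stating the result.
    """
    exemplar_answer = COT_ANSWER if use_cot else STANDARD_ANSWER
    return (
        f"Q: {EXEMPLAR_QUESTION}\n"
        f"A: {exemplar_answer}\n\n"
        f"Q: {question}\n"
        "A:"
    )


if __name__ == "__main__":
    new_question = (
        "The cafeteria had 23 apples. If they used 20 to make lunch "
        "and bought 6 more, how many apples do they have?"
    )
    print(build_prompt(new_question, use_cot=True))
```

The resulting string would be sent to whichever LLM you use. With the CoT exemplar, the model tends to imitate the format and write its own reasoning before the final answer.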

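What humanity expects from AI is not that it simply repeats what it has learned.

Trillions of won are being invested in the expectation that AI will someday solve humanity's unsolved problems.

From that perspective, this seemingly simple method may turn out to be a first clue toward that goal.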

https://arxiv.org/abs/2201.11903

Chain-of-Thought Prompting Elicits Reasoning in Large Language Models


✨ What is self-consistency?

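Let's think about this. When an LLM decodes with sampling, its output is ultimately drawn from a probability distribution, so it can change from run to run. The same input can produce a variety of answers.

So one option is to submit the same input several times, collect multiple answers, and decide the result by vote.

That is the self-consistency method.

As the figure below shows, greedy decoding explores only a single reasoning path, which makes it relatively vulnerable to mistakes.

Self-consistency instead explores diverse reasoning paths and takes the answer that appears most often, that is, the most self-consistent answer, as the final result.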
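The voting step can be sketched in a few lines. This is a minimal illustration, assuming every completion ends with a sentence of the form "The answer is N."; the sampled completions in `SAMPLED` are hand-written stand-ins for real model outputs, and the function names are hypothetical.

```python
import re
from collections import Counter
from typing import Optional


def extract_answer(completion: str) -> Optional[str]:
    """Pull the final numeric answer out of one sampled reasoning path."""
    match = re.search(r"The answer is\s+(-?\d+(?:\.\d+)?)", completion)
    return match.group(1) if match else None


def self_consistency(completions: list[str]) -> Optional[str]:
    """Majority vote over the final answers of several sampled paths."""
    answers = [a for a in map(extract_answer, completions) if a is not None]
    if not answers:
        return None
    # most_common(1) returns [(answer, count)] for the most frequent answer.
    return Counter(answers).most_common(1)[0][0]


# Hand-written stand-ins for k = 3 sampled completions (not real model output).
SAMPLED = [
    "23 - 20 = 3. 3 + 6 = 9. The answer is 9.",
    "They used 20, so 3 remain. Adding 6 gives 9. The answer is 9.",
    "23 + 6 = 29. The answer is 29.",
]

if __name__ == "__main__":
    print(self_consistency(SAMPLED))
```

Only the final answers are compared, so two paths that reason differently but reach the same number still count as agreeing.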

https://arxiv.org/abs/2203.11171

Self-Consistency Improves Chain of Thought Reasoning in Language Models


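🤖 A machine judges the result?

Self-consistency is not a cure-all.

If the base model is so weak that most of the samples are wrong, the vote returns a wrong answer.

This is why models that evaluate the correctness of an answer were devised.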

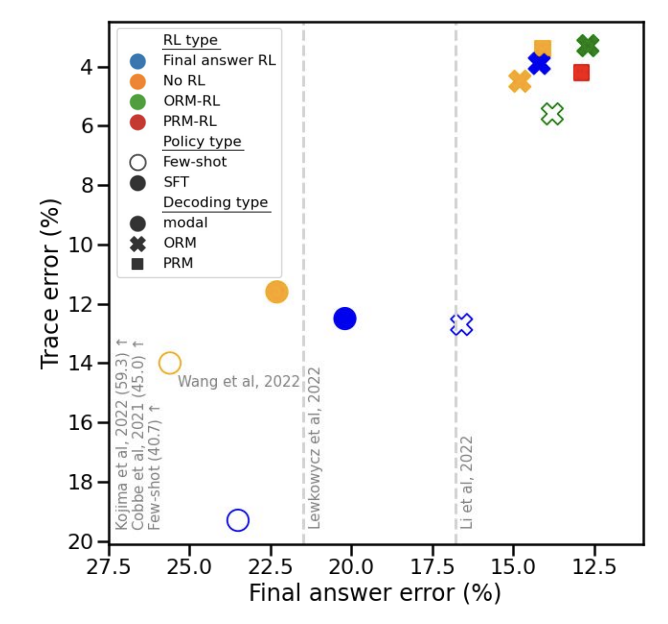

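- ORM (Outcome Reward Model): A model scores the final result of each sample, and the sample with the highest score is selected.

- PRM (Process Reward Model): Each step is treated as a separate episode, and the model assigns a score at every step (it evaluates whether the steps so far are correct).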
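The difference between the two selection rules can be sketched as follows. All scores here are made-up numbers standing in for reward model outputs, and multiplying the step scores into one solution score is my assumption for illustration (taking the minimum is another common choice); it is not necessarily what the paper does.

```python
from dataclasses import dataclass
from math import prod


@dataclass
class Candidate:
    steps: list[str]
    final_answer: str
    orm_score: float              # hypothetical ORM score for the whole solution
    prm_step_scores: list[float]  # hypothetical PRM scores, one per step


def select_with_orm(candidates: list[Candidate]) -> Candidate:
    """Pick the sample whose overall outcome score is highest."""
    return max(candidates, key=lambda c: c.orm_score)


def select_with_prm(candidates: list[Candidate]) -> Candidate:
    """Pick the sample whose steps are jointly most plausible.

    Assumption: the solution score is the product of its step scores,
    so a single bad step pulls the whole solution down.
    """
    return max(candidates, key=lambda c: prod(c.prm_step_scores))


CANDIDATES = [
    # Correct reasoning, correct answer.
    Candidate(["23 - 20 = 3", "3 + 6 = 9"], "9", 0.80, [0.95, 0.90]),
    # Two arithmetic slips that happen to land on the correct answer.
    Candidate(["23 - 20 = 2", "2 + 6 = 9"], "9", 0.85, [0.10, 0.15]),
    # Wrong reasoning, wrong answer.
    Candidate(["23 + 6 = 29"], "29", 0.30, [0.40]),
]

if __name__ == "__main__":
    print("ORM picks:", select_with_orm(CANDIDATES).steps)
    print("PRM picks:", select_with_prm(CANDIDATES).steps)
```

In this toy data the ORM prefers the second candidate because it only sees the outcome, while the PRM prefers the first because every step holds up.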

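According to the paper, using PRM and ORM significantly reduced the error rate.

It is also reported that, because this approach mirrors how humans think, its benefit is greatest on complex reasoning tasks such as math and coding.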

https://arxiv.org/abs/2211.14275

Solving math word problems with process- and outcome-based feedback


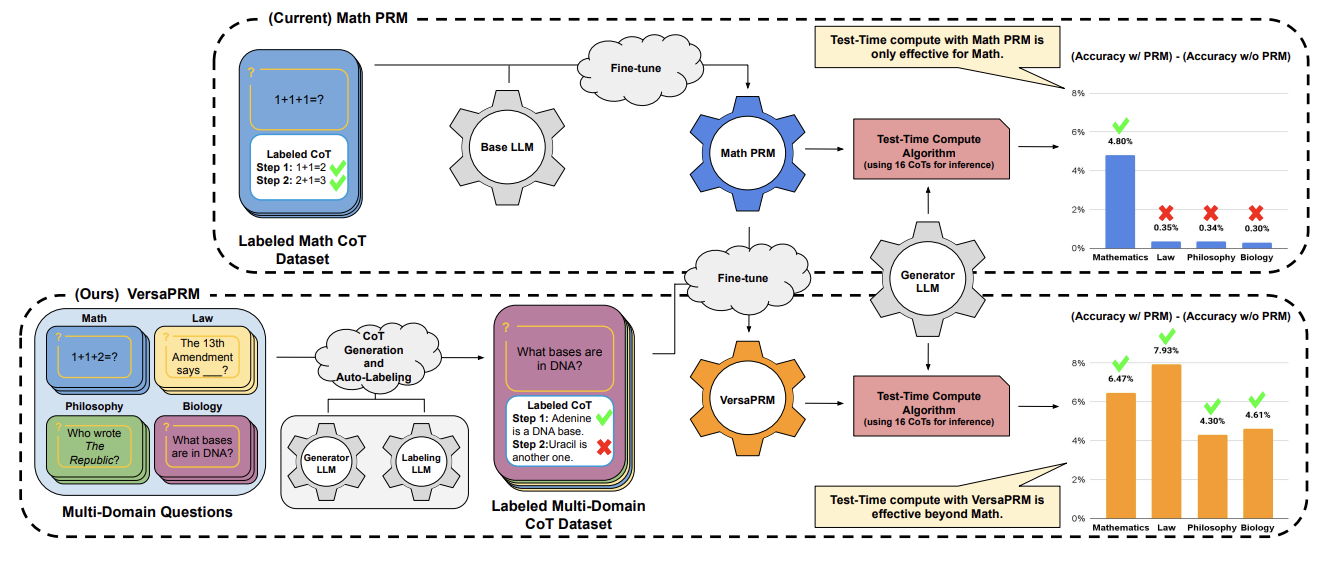

Because the PRM presented in the paper above is specialized for math problems, it was weak in other domains.

VersaPRM, published later, addressed this by training on multiple domains.

This work suggested that PRMs can perform well across many domains.

https://arxiv.org/abs/2502.06737

VersaPRM: Multi-Domain Process Reward Model via Synthetic Reasoning Data


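🤔 What is the problem with these methods?

Following these methods in order, you can feel them moving closer and closer to the way humans think.

But there is a cost problem.

Serving even one high-performance LLM is a burden. These CoT-based methods draw k samples, and if a separate PRM also has to be run to score every step, the cost in both time and compute resources grows accordingly.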
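A back-of-the-envelope count shows how quickly this adds up. This is a rough sketch under my own simplifying assumptions: one generator call per sample and one PRM call per reasoning step (a real PRM may score several steps in a single pass). The function name and the numbers are hypothetical.

```python
def inference_calls(k: int, steps_per_sample: int, use_prm: bool) -> dict[str, int]:
    """Count model calls for one question.

    Assumptions (for illustration only):
    - the generator is called once per sampled reasoning path;
    - the PRM is called once per step of every sampled path.
    """
    generator_calls = k
    prm_calls = k * steps_per_sample if use_prm else 0
    return {
        "generator": generator_calls,
        "prm": prm_calls,
        "total": generator_calls + prm_calls,
    }


if __name__ == "__main__":
    # Single greedy CoT answer vs. 8 samples vs. 8 samples scored by a PRM.
    print(inference_calls(k=1, steps_per_sample=5, use_prm=False))
    print(inference_calls(k=8, steps_per_sample=5, use_prm=False))
    print(inference_calls(k=8, steps_per_sample=5, use_prm=True))
```

Under these assumptions, moving from one greedy answer to 8 PRM-scored samples with 5 steps each turns 1 model call into 48.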

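Throughout the course I kept asking myself how this interesting method could be worked into a production environment.

Attempting to solve that problem was our research topic. (We ran out of time and did not fully solve it, of course 😅)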

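🔥 Nevertheless

Logical reasoning is currently one of the main things LLMs are evaluated on.

If you look at the tasks used to evaluate models on the Hugging Face Open LLM Leaderboard, many of the datasets evaluate reasoning.

This can be read as evidence of how important reasoning is for today's models.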

https://huggingface.co/docs/leaderboards/open_llm_leaderboard/about#tasks

About · Hugging Face


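As someone who majored in statistics, I watched the rise of deep learning with mixed feelings for a long time.

Statistics is a discipline of interpretation: it models the factors that produce a phenomenon and identifies the tendency of each variable. Deep learning, by contrast, predicted remarkably well but was a black box whose internal mechanism could not be known.

The market sided with predictive efficiency over interpretation, but as a specialist I still felt that thirst.

With CoT, however, we can offer an answer to the question "Why did the AI give this answer?"

This can address a chronic problem of deep learning, and it gives the model an explainable kind of reliability that even statistically minded people like me can accept.

Especially in professional fields where justification is vital, such as medicine, law, and finance, CoT will go beyond a simple technique and serve as a key safety mechanism.

I think the cost and time problems can be gradually solved through hardware acceleration and techniques for making models smaller.

The ability to think logically, on the other hand, seems to me an irreplaceable value, and I look forward to seeing where this goes.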
